25 changed files with 22 additions and 35 deletions

BIN

.DS_Store

BIN

Federated_Avg/.DS_Store

+ 22

- 35

FedNets.py → Federated_Avg/FedNets.py

+ 0

- 0

README.md → Federated_Avg/README.md

+ 0

- 0

Update.py → Federated_Avg/Update.py

BIN

Federated_Avg/__pycache__/FedNets.cpython-36.pyc

BIN

Federated_Avg/__pycache__/Update.cpython-36.pyc

BIN

Federated_Avg/__pycache__/averaging.cpython-36.pyc

BIN

Federated_Avg/__pycache__/options.cpython-36.pyc

BIN

Federated_Avg/__pycache__/sampling.cpython-36.pyc

+ 0

- 0

averaging.py → Federated_Avg/averaging.py

BIN

Federated_Avg/local/events.out.tfevents.1543212153.Nitros-MacBook-Pro.local

+ 0

- 0

main_fedavg.py → Federated_Avg/main_fedavg.py

+ 0

- 0

main_nn.py → Federated_Avg/main_nn.py

+ 0

- 0

options.py → Federated_Avg/options.py

+ 0

- 0

sampling.py → Federated_Avg/sampling.py

BIN

data/.DS_Store

+ 0

- 0

data/cifar/.gitkeep

+ 0

- 0

data/mnist/.gitkeep

BIN

save/.DS_Store

+ 0

- 0

save/.gitkeep

BIN

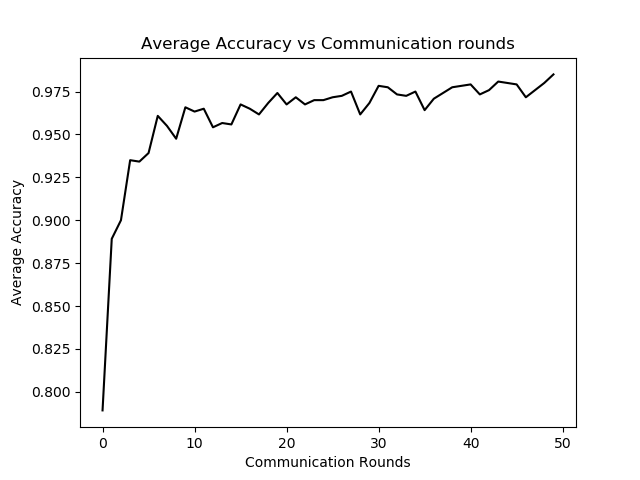

save/fed_mnist_cnn_2_C0.1_iid1_acc.png

BIN

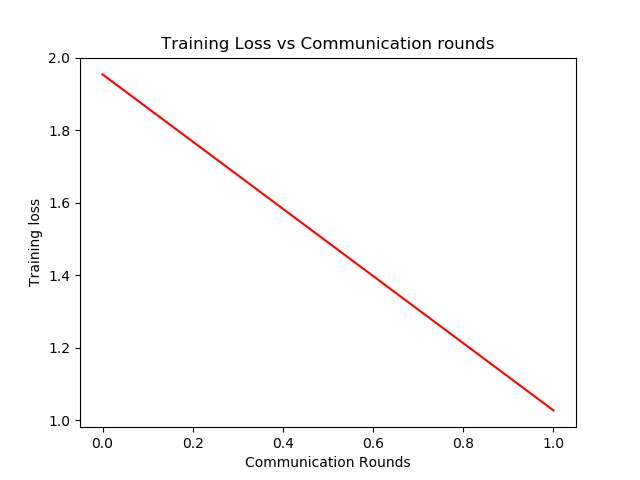

save/fed_mnist_cnn_2_C0.1_iid1_loss.png

BIN

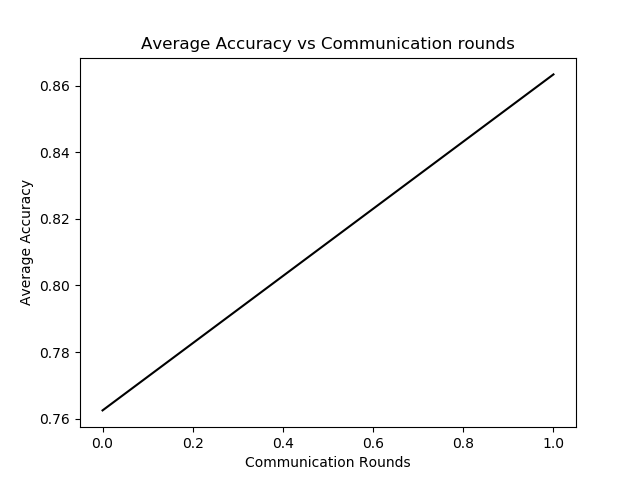

save/fed_mnist_cnn_50_C0.1_iid1_acc.png

BIN

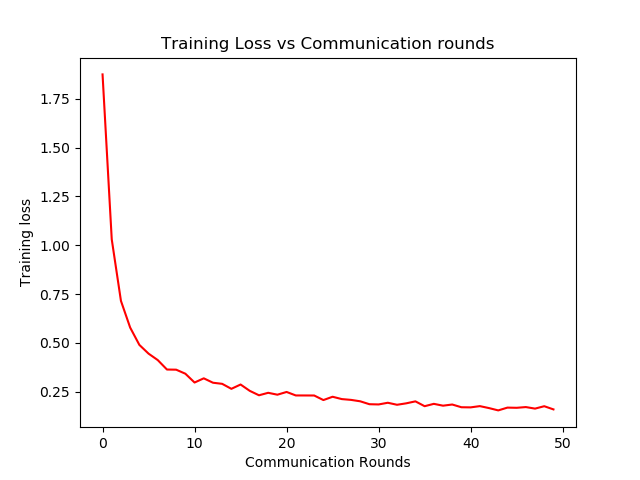

save/fed_mnist_cnn_50_C0.1_iid1_loss.png